This post will walk through an in-place upgrade of VMware Site Recovery Manager (SRM) to version 8.1, which introduces support for the vSphere HTML5 client and for recovery and migration to VMware Cloud on AWS. Read more about what’s new in this blog post. The upgrade itself is relatively simple, but we need to cross-check compatibility beforehand and perform validation tests after running the upgrade installer.

Planning

- The Site Recovery Manager upgrade retains configuration and information such as recovery plans and history, but does not preserve any advanced settings

- Protection groups and recovery plans must also be in a valid state to be retained; any invalid configurations are not migrated

- Check the upgrade path here; for Site Recovery Manager 8.1 we can upgrade from 6.1.2 and later

- If vSphere Replication is in use then upgrade vSphere Replication first, following the steps outlined here

- Site Recovery Manager 8.1 is compatible with vSphere 6.0 U3 onwards and VMware Tools 10.1 onwards; see the compatibility matrices page here for full details

- Ensure the vCenter and Platform Services Controller are running and available

- From Site Recovery Manager 8.1 the version number is decoupled from vSphere; however, check whether any other components need to be upgraded for compatibility

- For other VMware products check the product interoperability site here

- If you are unsure of the upgrade order for VMware components see the Order of Upgrading vSphere and Site Recovery Manager Components page here

- Make a note of any advanced settings you may have configured under Sites > Site > Manage > Advanced Settings

- Confirm you have Platform Services Controller details, the administrator@vsphere.local password, and the database details and password
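The version minimums in the planning list can be turned into a quick pre-flight check. This is a minimal Python sketch, not a VMware tool: you type the versions in by hand, the function names are hypothetical, and encoding vSphere 6.0 U3 as `6.0.3` (major.minor.update) is an assumption made so the values compare as plain tuples. It does not replace the compatibility matrices or the interoperability site.

```python
from typing import List, Tuple

# Minimums quoted in the planning notes for Site Recovery Manager 8.1.
MIN_SRM_SOURCE = (6, 1, 2)  # oldest SRM release listed as upgradable to 8.1
MIN_VSPHERE = (6, 0, 3)     # vSphere 6.0 U3, encoded as (major, minor, update): an assumption
MIN_TOOLS = (10, 1)         # VMware Tools 10.1


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn a dotted version string such as '6.5.1' into (6, 5, 1)."""
    return tuple(int(part) for part in text.strip().split("."))


def check_prerequisites(srm: str, vsphere: str, tools: str) -> List[str]:
    """Return human-readable problems; an empty list means every minimum is met.

    Tuples compare element by element, so (6, 1) < (6, 1, 2) and
    (10, 0, 12) < (10, 1), which is the ordering we want for versions.
    """
    problems: List[str] = []
    if parse_version(srm) < MIN_SRM_SOURCE:
        problems.append(f"SRM {srm} is below 6.1.2; check the supported upgrade path")
    if parse_version(vsphere) < MIN_VSPHERE:
        problems.append(f"vSphere {vsphere} is below 6.0 U3")
    if parse_version(tools) < MIN_TOOLS:
        problems.append(f"VMware Tools {tools} is below 10.1")
    return problems


if __name__ == "__main__":
    # Illustrative versions typed in by hand from the vSphere client.
    print(check_prerequisites(srm="6.5.1", vsphere="6.7", tools="10.3.0"))  # []
```

Any non-empty result is a prompt to revisit the compatibility pages before downloading anything.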

Download the VMware Site Recovery Manager 8.1.0.4 self-extracting installer here to the SRM server and, if you use storage (array-based) replication, the updated Storage Replication Adapter (SRA). Review the release notes here, and the SRM upgrade documentation centre here.

Database Backup

Before starting the upgrade, make sure you take a backup of the embedded vPostgres database or the external database. Full instructions can be found here; in summary:

- Log into the SRM Windows server and stop the VMware Site Recovery Manager service

- From a command prompt, run the following commands, replacing the db_username and srm_backup_name parameters, and the install path and port if they were changed from the default settings

    cd /d "C:\Program Files\VMware\VMware vCenter Site Recovery Manager Embedded Database\bin"
    pg_dump -Fc --host 127.0.0.1 --port 5678 --username=db_username srm_db > srm_backup_name

- If you need to restore the vPostgres database, follow the instructions here

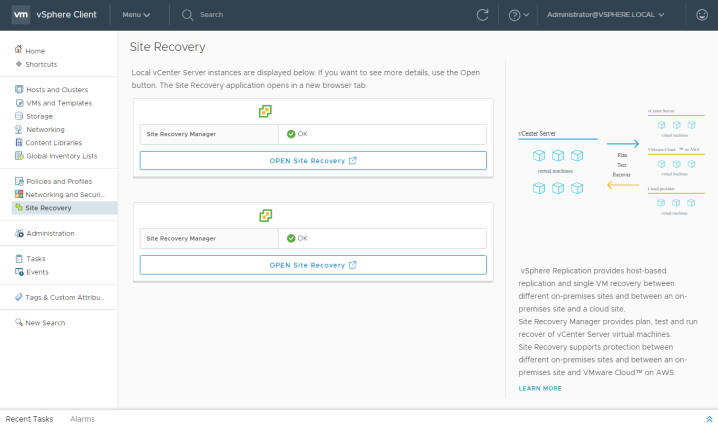
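If you back up more than one SRM server, it can help to build the same `pg_dump` call from a script so the arguments are identical each time. The Python sketch below mirrors the command from the backup steps (default path, port 5678, database `srm_db`); the `--file` option replaces the shell redirect, while the function names and the timestamped file-naming scheme are hypothetical choices for illustration. It only prints the command by default; `run_backup` should be called on the SRM server after the SRM service has been stopped.

```python
import subprocess
from datetime import datetime
from pathlib import PureWindowsPath
from typing import List, Optional

# Default embedded database install path and port from the backup instructions;
# change both if they were customised when SRM was installed.
PG_BIN = PureWindowsPath(
    r"C:\Program Files\VMware\VMware vCenter Site Recovery Manager Embedded Database\bin"
)
DEFAULT_PORT = 5678


def build_pg_dump_command(db_username: str, backup_file: str, port: int = DEFAULT_PORT) -> List[str]:
    """Mirror the pg_dump command from the backup steps as an argument list.

    --file replaces the shell '>' redirect, so no command shell is involved.
    """
    return [
        str(PG_BIN / "pg_dump.exe"),
        "-Fc",                      # custom-format archive, restorable with pg_restore
        "--host", "127.0.0.1",
        "--port", str(port),
        f"--username={db_username}",
        f"--file={backup_file}",
        "srm_db",                   # database name used in the original command
    ]


def default_backup_name(now: Optional[datetime] = None) -> str:
    """Hypothetical naming scheme: srm_backup_YYYYMMDD_HHMM.dump"""
    now = now or datetime.now()
    return now.strftime("srm_backup_%Y%m%d_%H%M.dump")


def run_backup(command: List[str]) -> None:
    """Run pg_dump; call only on the SRM server with the SRM service stopped.

    pg_dump prompts for the password unless PGPASSWORD or a pgpass file supplies it.
    check=True raises CalledProcessError if pg_dump exits with a non-zero status.
    """
    subprocess.run(command, check=True)


if __name__ == "__main__":
    command = build_pg_dump_command("db_username", default_backup_name())
    print(command)  # review the arguments before calling run_backup(command)
```

Passing a list rather than a single string avoids quoting problems with the spaces in the install path.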

In addition to backing up the database, check the health of the SRM servers and confirm there are no pending reboots. Log into the vSphere Web Client and navigate to the Site Recovery section; verify there are no pending cleanup operations or configuration issues, and that all recovery plans and protection groups are in a Ready state.
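Because invalid protection groups and recovery plans are not migrated, it is worth recording their states before upgrading. As a minimal sketch, assuming you copy each name and state by hand from the Site Recovery view into a Python dictionary, the hypothetical helper below lists anything that is not Ready; the inventory names and the non-Ready state text are illustrative only.

```python
from typing import Dict, List


def items_not_ready(states: Dict[str, str]) -> List[str]:
    """Return the names of recovery plans or protection groups not in the Ready state.

    The comparison ignores case and surrounding whitespace, since the states
    are assumed to be copied by hand from the Site Recovery view.
    """
    return sorted(name for name, state in states.items() if state.strip().lower() != "ready")


if __name__ == "__main__":
    # Hypothetical inventory; names and the non-Ready state text are illustrative.
    inventory = {
        "RP-Finance": "Ready",
        "PG-Finance": "Ready",
        "RP-Web": "Cleanup required",
    }
    blocked = items_not_ready(inventory)
    if blocked:
        print("Resolve before upgrading:", blocked)  # Resolve before upgrading: ['RP-Web']
    else:
        print("All recovery plans and protection groups are Ready")
```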

Process

As noted above, vSphere Replication should be upgraded before Site Recovery Manager. In this instance we are using Nimble storage replication, so the Storage Replication Adapter (SRA) should be upgraded first. Download and run the SRA upgrade installer; in most cases it is a simple Next, Install, Finish.

We can now start the Site Recovery Manager upgrade. It is advisable to take a snapshot of the SRM server and ensure backups are in place first. On the SRM server, run the installer downloaded earlier.

- Select the installer language and click OK, then Next

- Click Next on the patent screen, accept the EULA and click Next again

- Double-check you have performed all prerequisite tasks and click Next

- Enter the FQDN of the Platform Services Controller and the SSO admin password, click Next

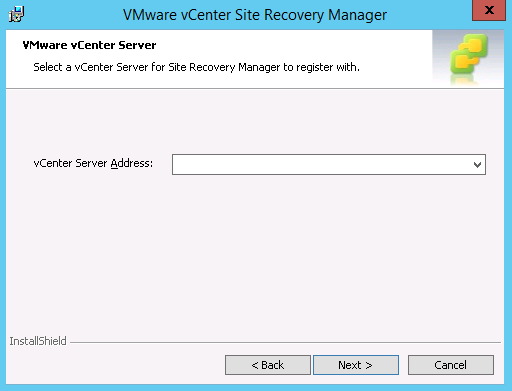

- The vCenter Server address is auto-populated, click Next

- The administrator email address and local host ports should again be auto-populated, click Next

- Click Yes when prompted to overwrite the existing registration

- Select the appropriate certificate option, in this case keeping the existing certificate, click Next

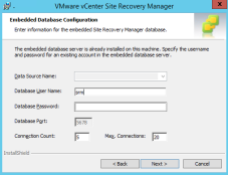

- Check the database details and enter the password for the database account, click Next

- Configure the service account to run the SRM service (again, this retains the existing settings by default), click Next

- Click Install, then Finish once the installation completes

Post-Upgrade

After Site Recovery Manager is upgraded, log into the vSphere Client. If the Site Recovery option does not appear immediately, you may need to clear your browser cache or restart the vSphere Client service.

On the summary page, confirm both sites are connected; if you encounter connection problems, you may need to reconfigure the site pair.

Validate the recovery plan and run a test to confirm there are no configuration errors.

The test should complete successfully.

I can also check the replication status and Storage Replication Adapter status.
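Since the upgrade does not preserve advanced settings, the values noted during planning need to be compared with what the upgraded site now shows. A minimal Python sketch, assuming both sets are typed into dictionaries by hand: the hypothetical helper returns every setting that is missing or different after the upgrade, together with the value to re-enter. The setting names in the example are placeholders, not real SRM option names.

```python
from typing import Dict


def settings_to_reapply(before: Dict[str, str], after: Dict[str, str]) -> Dict[str, str]:
    """Return pre-upgrade advanced settings that are missing or changed after the upgrade.

    The result maps each setting name to the value recorded before the upgrade,
    i.e. the value to re-enter under Advanced Settings.
    """
    return {name: value for name, value in before.items() if after.get(name) != value}


if __name__ == "__main__":
    # Hypothetical setting names and values, noted by hand before and after the upgrade.
    noted_before = {"example.timeoutSeconds": "900", "example.retryCount": "5"}
    observed_after = {"example.timeoutSeconds": "300"}
    print(settings_to_reapply(noted_before, observed_after))
    # {'example.timeoutSeconds': '900', 'example.retryCount': '5'}
```

Comparing as strings keeps the sketch simple; values that differ only in formatting (for example `900` versus `900.0`) would be reported and need a manual look.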